
Setting up the Bullet world with a new robot

This tutorial will walk you through creating your own repo/metapackage from scratch that uses CRAM and the Bullet world with your own robots. In this tutorial, we will use the Boxy robot as an example.

The starting point of this tutorial is an installation of CRAM including projection with PR2. If you followed the installation manual, you should have all the necessary packages already.

Directory / file setup

First of all, let's create a directory for our code and the metapackage. In the src directory of a catkin workspace, create a new directory called cram_boxy, and inside it create a catkin metapackage with the same name:

<code bash>
mkdir cram_boxy && cd cram_boxy
catkin_create_pkg cram_boxy && cd cram_boxy
</code>

Edit the CMakeLists.txt such that it contains only the metapackage boilerplate code:

<code cmake>
cmake_minimum_required(VERSION 2.8.3)
project(cram_boxy)
find_package(catkin REQUIRED)
catkin_metapackage()
</code>
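catkin_metapackage() also checks the package.xml of the metapackage. It has to declare `<metapackage/>` in its export section and buildtool_depend on catkin, and the package.xml generated by catkin_create_pkg does not contain that export tag. The following is a minimal sketch that overwrites the generated file from the shell. The description, maintainer and e-mail are placeholders, and listing cram_boxy_knowledge as the member package assumes the package created in the next step. It uses package format 2 (`exec_depend`); with format 1 you would write `run_depend` instead.

```shell
# Run inside cram_boxy/cram_boxy (the metapackage directory).
# Overwrites the generated package.xml with a minimal metapackage manifest.
# Description, maintainer and e-mail below are placeholders.
cat > package.xml <<'EOF'
<?xml version="1.0"?>
<package format="2">
  <name>cram_boxy</name>
  <version>0.0.0</version>
  <description>Metapackage for using CRAM with the Boxy robot</description>
  <maintainer email="you@example.com">Your Name</maintainer>
  <license>BSD</license>

  <!-- a metapackage only needs catkin as its buildtool -->
  <buildtool_depend>catkin</buildtool_depend>
  <!-- the packages grouped by this metapackage (assumed) -->
  <exec_depend>cram_boxy_knowledge</exec_depend>

  <export>
    <metapackage/>
  </export>
</package>
EOF
```

Each package you add to the cram_boxy directory later on can be listed with another `exec_depend` line.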

The first ROS package we will create is the Prolog description of our robot. We will call it cram_boxy_knowledge.

Go back to the root of your cram_boxy directory and create the package. For now, the dependencies we definitely need are cram_prolog (as we're defining Prolog rules) and cram_robot_interfaces (which defines the names of the predicates for describing robots in CRAM):

<code bash>
cd ..
catkin_create_pkg cram_boxy_knowledge cram_prolog cram_robot_interfaces
</code>

Now let's create the corresponding ASD file called cram_boxy_knowledge/cram-boxy-knowledge.asd:

<code lisp>
;;; You might want to add a license header first

(defsystem cram-boxy-knowledge
  :author "Your Name"
  :license "BSD"

  :depends-on (cram-prolog
               cram-robot-interfaces)
  :components
  ((:module "src"
    :components
    ((:file "package")
     (:file "boxy-knowledge" :depends-on ("package"))))))
</code>

Now create the corresponding src directory and a cram_boxy_knowledge/src/package.lisp file in it:

<code lisp>
;;; license

(in-package :cl-user)

(defpackage cram-boxy-knowledge
  (:use #:common-lisp
        #:cram-prolog
        #:cram-robot-interfaces))
</code>
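ASDF resolves the components listed in cram-boxy-knowledge.asd relative to the directory of the .asd file. The module "src" maps to src/, and each :file component gets a .lisp extension. The small shell sketch below is run from the root of the cram_boxy directory, and its loop only covers the two components declared above. It creates the src directory if needed and reports which of the expected source files already exist:

```shell
# Run from the root of the cram_boxy directory.
# (:module "src") -> cram_boxy_knowledge/src/
# (:file "package") -> cram_boxy_knowledge/src/package.lisp, and so on.
pkg_dir=cram_boxy_knowledge
mkdir -p "$pkg_dir/src"

# Report which component files from the .asd file exist so far.
for component in package boxy-knowledge; do
  file="$pkg_dir/src/$component.lisp"
  if [ -f "$file" ]; then
    echo "found:   $file"
  else
    echo "missing: $file"
  fi
done
```

At this point, src/boxy-knowledge.lisp should still be reported as missing, because that file is only written in the Prolog description step.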

Robot URDF description

Now, before creating the file with the actual code (called boxy-knowledge.lisp, as you can see from the cram-boxy-knowledge.asd file), we first need a URDF description of our robot. For Boxy, it is located in a repository on GitHub, so let's clone it into our ROS workspace:

<code bash>
cd ROS_WORKSPACE_FOR_LISP_CODE
cd src
git clone https://github.com/code-iai/iai_robots.git
</code>

Now let's compile our workspace so that ROS learns about the new cram_boxy packages as well as the iai_robots packages.

CRAM takes the URDF descriptions of the robots from the ROS parameter server, i.e., you will need to upload the URDFs of your robots. For Boxy there is a launch file that does that; you can find it here:

roscd iai_boxy_description/launch/ && ls -l

It's called upload_boxy.launch and has the following contents:

<code xml>
<launch>
  <arg name="urdf-name" default="boxy_description.urdf.xacro"/>
  <arg name="urdf-path" default="$(find iai_boxy_description)/robots/$(arg urdf-name)"/>
  <param name="robot_description"
         command="$(find xacro)/xacro.py '$(arg urdf-path)'" />
</launch>
</code>

As we will need to know the names of the TF frames later, we will launch a general file that includes uploading the URDF as well as a robot state publisher:

roslaunch iai_boxy_description upload_boxy.launch

(It also starts a GUI to play with the joint angles, but let's ignore that.)

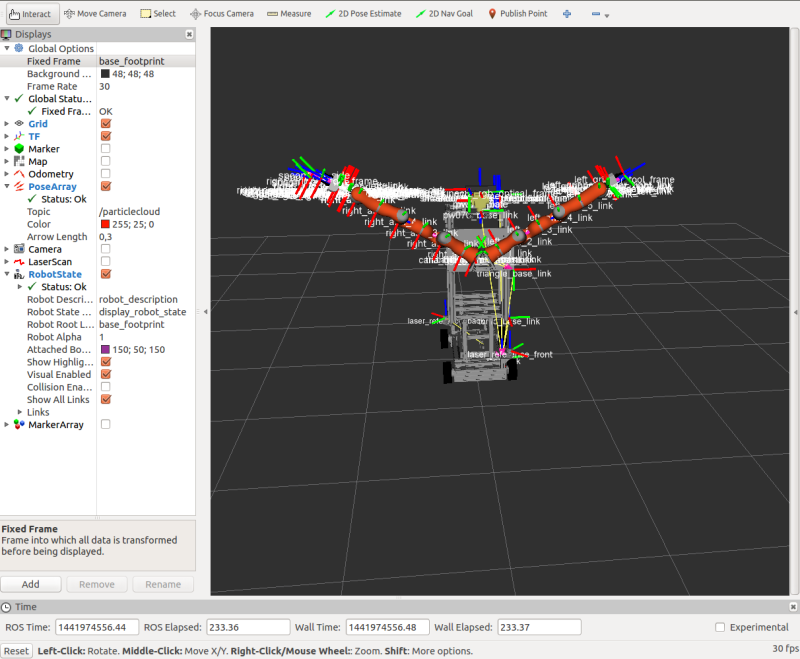

Let's check if it's there using RViz:

<code bash>
rosrun rviz rviz
</code>

Then, in RViz:

- Add -> Robot Model, and set its Robot Description to robot_description
- Add -> TF

To be able to see the TF frames, choose base_footprint as the global fixed frame.

Boxy Prolog description

Now that we have a URDF on the ROS parameter server, let's also describe our robot in Prolog.

We create a file cram_boxy/cram_boxy_knowledge/src/boxy-knowledge.lisp and fill it in with the simplest descriptions, which are the ones relevant for navigation and vision. We will leave out manipulation for now, as it is too complex for the scope of this tutorial.

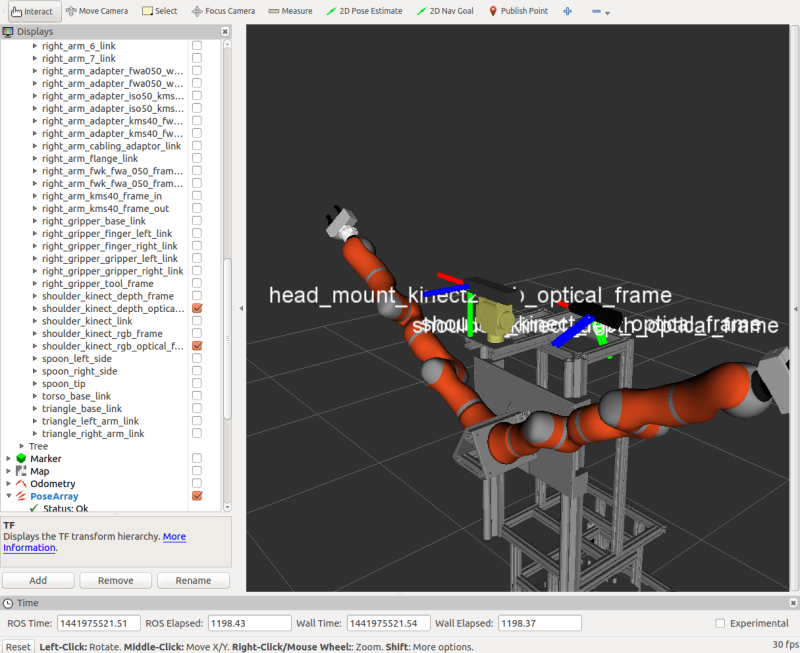

As can be seen from the TF tree, our robot has 3 camera frames: a depth frame and an RGB frame from a Kinect camera, and an RGB frame from a Kinect2 camera.
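The camera frame names used in the Prolog facts are URDF link names, which robot_state_publisher also uses as TF frame names. One way to look them up without RViz is to filter the expanded URDF for links ending in _optical_frame. The helper list_optical_frames below is hypothetical. It assumes that every link tag is written as `<link name="...">`, with the name attribute first and in double quotes, and it does plain text matching rather than real XML parsing:

```shell
# Hypothetical helper: print the names of all URDF links ending in
# "_optical_frame", reading the URDF from stdin.
# Assumes tags look like <link name="..."> (name first, double quotes).
list_optical_frames() {
  grep -o '<link name="[^"]*_optical_frame"' |
    sed -e 's/^<link name="//' -e 's/"$//'
}
```

For example, expanding the same xacro file that upload_boxy.launch uses: `"$(rospack find xacro)/xacro.py" "$(rospack find iai_boxy_description)/robots/boxy_description.urdf.xacro" | list_optical_frames`.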

Its camera can be between 1.60 m and 2.10 m above the ground, depending on the torso position. It also has a pan-tilt unit.

<code lisp>
;;; license

(in-package :cram-boxy-knowledge)

(def-fact-group boxy-metadata (robot
                               camera-frame
                               camera-minimal-height camera-maximal-height
                               robot-pan-tilt-links robot-pan-tilt-joints)

  (<- (robot boxy))

  (<- (camera-frame boxy "head_mount_kinect2_rgb_optical_frame"))
  (<- (camera-frame boxy "shoulder_kinect_rgb_optical_frame"))
  (<- (camera-frame boxy "shoulder_kinect_depth_optical_frame"))

  (<- (camera-minimal-height boxy 1.60))
  (<- (camera-maximal-height boxy 2.10))

  (<- (robot-pan-tilt-links boxy "pw070_box" "pw070_plate"))
  (<- (robot-pan-tilt-joints boxy "head_pan_joint" "head_tilt_joint")))
</code>

Loading the world

Now let's try to set up the world. We will only spawn the floor, the robot, and a couple of household objects.

For that, we

- load the bullet reasoning designators package in the REPL

- start a ROS node

- spawn the robot and the floor

<code lisp>
(asdf:load-system :cram-bullet-reasoning-designators)
(in-package :btr)